I'd like to discuss my journey with AI: from the existential dread that consumed me a few years ago, to the reframe, to the art I'm now making to find meaning in a post-AI world.

My name is D. Anson Brody. I'm an artist working in music and photography, and I'm fortunate to have had a long, albeit very small, career in the arts. Like many artists, I've sacrificed a lot to pursue a creative life of craft, learning, and expression, with times of pushing hard toward a goal and other times when the creative fields need to lie fallow. So many years of traveling, sleeping in the car and on couches, eating ramen, destroying my health, and sacrificing relationships, all for the meaning I find in my work.

In 2017 I first had a thought that has plagued me ever since: if human beings couldn't tell the difference between the work of a human and the work of AI, then a society craving ever more convenience would default to using AI's work. If artificial intelligence could listen to my recordings, read my poems, and digest my photos and social media posts, it could in theory be prompted to mimic my work well enough that others couldn't tell the difference. What purpose would I have left if no one cared to tell the difference?

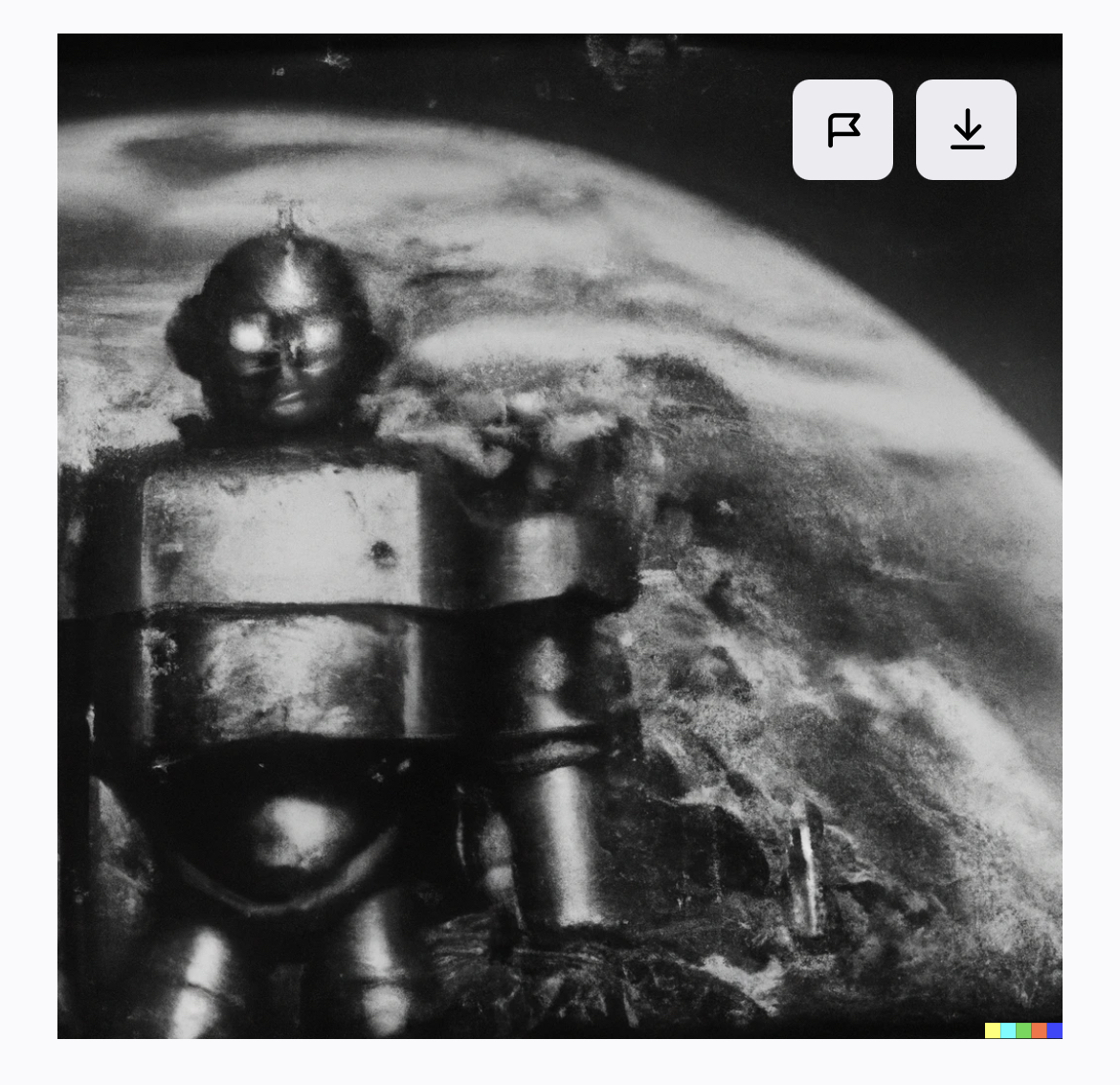

Now we come to 2021, and Dall-E, one of the first widely publicized text-to-image generative AIs. It freaked me out! The thing I'd feared was finally here. It wasn't advanced, but I knew it was only the start. In September 2022 I got an invite to the Dall-E 2 beta, and my curiosity got the best of me. I had to try it and see what it would render from my prompt. I typed in something like “19th century photograph of old style robot taking over the world,” and after some iteration it spat this out.

First Dall-E image

Then I got more specific, asking for the same thing with added variations like “looming from behind the earth menacingly,” and eventually got this.

Second Dall-E image

I saved these screenshots, played around with a few other ideas, and then never really returned to the idea or to Dall-E. Then, earlier this year (2023), I was walking through an antique store in Three Oaks, Michigan, and saw this vintage robot toy from Japan. Parts of it were missing, but it reminded me of the image I'd prompted from Dall-E a year or so earlier. I thought: I'm going to make a photo about AI, inspired by AI, but as a handmade, 19th-century-style plate. I'll use an older analog medium to frame the past and speak to a digital future, all while expressing this current moment, in society and within myself, regarding AI.

Robot toy at the antique store.

A tintype, one of the earliest photographic methods (dating back to the 1850s), is a direct positive image exposed through a wet chemical process directly onto a thin piece of metal. The size of the large-format camera determines the tintype's maximum size, while the characteristics of the chemical process and its hand application add artifacts and uniqueness. The finished product is a one-of-a-kind metal plate, with the image composed of the contrast between the black metal and highlights made of pure metallic silver. It's a medium that is physical first and digital (through scanning) later, if ever. Much of a tintype's reflective, moody character cannot be appreciated on a computer screen; tintypes are much better experienced in person.

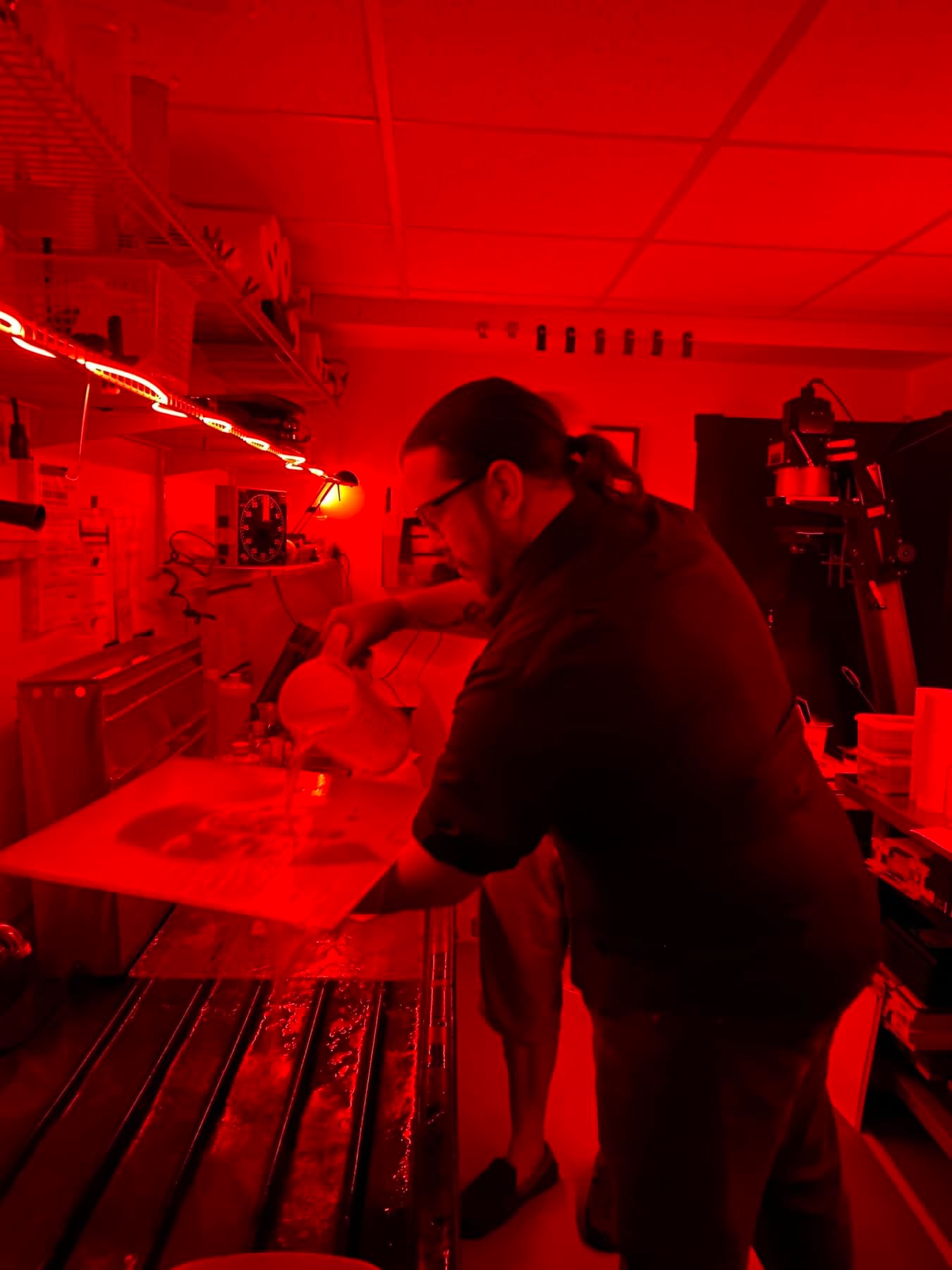

I initially visualized this shot in a different 19th-century process called dry plate. Coincidentally, I ended up taking a refresher tintype class with my friend Doug Hanson, an alternative-process photographer. At the time I saw it as something fun to do on a day off. Though I'd made some tintypes before, I had more or less dismissed the idea of ever doing them seriously, because I preferred the look and versatility of my dry plates. During Doug's class I got to see some of his original 16x20 tintype plates. They were nicely lit, with the room a bit darker than the light on the plate, and the way he shot them made me fall in love with their moody, somewhat spooky look. Something about the bigger scale and Doug's lighting really captured my heart and imagination. Doug could see I was really into the big plates on the wall, so he suggested I shoot with the big camera. I told him about an idea for a self-portrait, and with his help I poured and developed my first 16x20 tintype plate. It was also my first experience with an ultra-large-format camera. When I saw the image come up in the fixer, I knew this was how I wanted to shoot my toy robot, and that I wanted to make a continuing series commenting on AI in this format.

Making my first ultra-large-format tintype (16x20)

After getting everything I needed together, I started making test shots and got into a groove after some practice, taking different kinds of portraits of the robot toy. The toy is only about 12" tall, so these close portraits were technically macro shots on an 8x10 camera. That meant a lot of bellows extension, which cost several stops of light. On top of that, I knew the depth of field would be much thinner at 16x20, so I tested at f/16 even on the 8x10. Because tintype is an older photographic technology, it's very insensitive to light, roughly ISO 1 on average. Luckily, the robot and the globe are inorganic still-life subjects, so I could hit them with strobe light over and over as needed. It took 30 pops from a couple of 4800 Ws strobes to get a perfectly exposed plate at f/16.
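For anyone curious about the exposure math behind macro work and multiple strobe pops, here is a rough sketch. It uses the common bellows-factor approximation, (lens-to-film distance / focal length)², which ignores lens-specific details like pupil magnification. The 300 mm lens and 1:1 magnification are hypothetical numbers chosen for illustration, not the actual setup used for these plates. It also assumes each strobe pop adds the same amount of light and that the plate responds to the total light the same way it would to one big flash.

```python
import math


def bellows_factor(extension_mm: float, focal_length_mm: float) -> float:
    """Exposure multiplier from racking the lens out past infinity focus.

    Common approximation: (lens-to-film distance / focal length) ** 2.
    """
    return (extension_mm / focal_length_mm) ** 2


def stops(factor: float) -> float:
    """Convert a light multiplier into photographic stops (1 stop = 2x light)."""
    return math.log2(factor)


if __name__ == "__main__":
    # Hypothetical example: a 300 mm lens at 1:1 magnification needs the
    # lens-to-film distance doubled to 600 mm.
    factor = bellows_factor(600, 300)
    print(f"bellows factor {factor:.1f}x = {stops(factor):.1f} stops of light loss")

    # Repeated pops add light linearly (assumed), so n pops gain log2(n) stops
    # over a single pop.
    pops = 30
    print(f"{pops} pops = {stops(pops):.2f} stops more than one pop")
```

At 1:1, the bellows alone cost about two stops, and 30 pops buy back a little under five stops compared with a single flash, which gives a sense of why an ISO 1 plate at f/16 needed so many.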

I ended up with a clear glass globe with reflective, mirror-ish continents. I set up the shot and made tests to get the light and composition right. I ended up scraping the silver mirror continents off half of the globe to get the light on the face the way I wanted it; the paint was blocking light and creating shadows I didn't want. The metaphor of having to destroy half the globe to shine a light on AI wasn't lost on me. This also made the clear areas of the globe glow as if light were pouring out from inside the earth, which I loved. I found an adjustable magnet in my “I might need that again someday” bin to hang the robot at an angle, looming over the globe.

The Dall-E image that initially inspired this framing was too menacing. I wanted to express anxiety, not fear. In my experiments, lighting the toy's eyes from behind was far too much. I wanted the robot's face to feel “scary” like my dad with a flashlight under his chin around the campfire, not like a glowing-eyed monster in the woods waiting to get me. The robot toy's face is much more charming than the one in the Dall-E image, so that helped. The specular highlights in the robot's eyes have enough life in them without evoking fear, and the contrast levels feel more like an old sci-fi movie poster, similar to one of my all-time favorites, “The Day the Earth Stood Still”: scary in its themes, maybe, but charming, and growing a bit camp with age.

Poster for "The Day the Earth Stood Still"

After getting a satisfying 8x10 test plate, I went back to Doug's place to shoot with his massive camera. Compared to 8x10, the plates, chemicals, holder, and camera were all terribly cumbersome and difficult to work with. Everything had to be done slowly. It took all day to make three attempts, the third of which was perfect.

Final 16x20 plate image

New 16x20 plate camera.

Now that I've made my first plate towards this project, I've invested in a camera setup to be able to see it through. This 16x20 plate camera was designed and built by Doug Hanson. He says he's not really a camera builder, but he made an extra when he built his 16x20 for himself.

Final Notes: While I've been working on this project, there's been a lot of discussion about how AI scrapes from other artists. I think artists have every right to be angry and feel cheated by tech companies; in my opinion, much of the tech world gained its power through kleptocracy. I make an exception for myself with regard to this blog, though I'll admit that's a bit self-serving. We need to see whether AI can be fixed and integrated, and how artists react to its invention and integration into society. I think I stayed within the boundaries of curiosity and used the inspiration ethically, to make something for the tangible world that expresses something deep within me. Maybe you disagree; it all definitely still feels anxious.

I want to thank Doug Hanson for his mentorship in this medium, and send a quick shout-out to Shane Balkowitsch, another artist working in wet plate, for his insights and blog posts about the relationship between photography and these AI image generators.

I keep thinking of a clip of Cher talking about how she spent her whole life crafting her music, and, well… herself, and then someone used AI to mimic her voice and style singing Madonna songs. She felt that was too far, because she had fought all her life to be exactly herself, and I really felt that. Are the seeds and first fruits of this project the same? Too far? Is it even important to make distinctions and draw lines in grey areas, or is AI the enemy of human artists? Pandora's box is open; AI is here, and we'll have to deal with it somehow. To add another layer of convolution to this project, I even used OpenAI's ChatGPT to outline how I should write this blog, and asked for its help along the way in making my writing clearer and more concise.

I encourage readers to share their own experiences and thoughts on this topic. Please leave comments, feedback, vitriol, etc. below.